I never got in-depth training in any one research method, despite working in applied research, in one way or another, for over a dozen years. I have a patchwork background in journalism; some quant classes; training in service design and oral history; on-the-job lessons from smart colleagues with expertise in UX, evaluation, and information visualization. A couple degrees, with a through-line only I can draw.

Then, I got an illness in which every patient, no matter how Google-averse, no matter how little high school biology they remember, is more informed than most clinicians and researchers by dint of being a roving, self-monitoring, research site, a being that cannot help but gather data and seek patterns in self-preservation. My survival was dependent on the all-consuming investigation of my spiralling health, remaining up-to-date on a quickly moving field, and knowledge translation of my findings to my fumbling medical team, my caregivers, and the public health system. I was the best source of both data and analysis for an illness too new to have robust clinical guidelines.

There’s downsides to this methodological sluttiness, including never knowing which academic journals to publish in, but also an agility, a way of thinking about problems, an imperviousness to procedure and formality for its own sake, an openness to messy, multidisciplinary data and willingness to use the best tools for the job. I’ve honed a kind of dialectic and systems investigation; a flexible, mixed methods approach that is different every time; and an ability to ask the right questions, see the whole from parts and tell evocative, actionable, true stories.

The Canadian Institutes of Health Research defines patient engagement in research as the full agentic spectrum: “when patients meaningfully and actively collaborate in the governance, priority setting, and conduct of research, as well as in the summarizing, distributing, sharing, and applying its resulting knowledge.” Elizabeth Manafo et al argue that its rise reflects “a growing desire for more ethical, democratic or moral healthcare practices…a more informed and accountable research agenda”. It’s a move away from “health discipline paternalism” to “patient-centered care”; the patient as active stakeholder, not passive recipient of expertise. In some schools of thought, “patient” broadens beyond the individual to their families, non-medical caregivers, and the organizations that advocate for them.

Nowhere in these engagement models is a category for the patient who was (or is) a researcher, clinician, or science communicator, borrowing those skills to understand first-hand problems. Second on Manafo et al’s list of Successful Engagement Approaches, after “engage early and continuously”, is clear, separate roles and expectations for patients and researchers. But some of the best and most devastatingly accurate work of the pandemic has come from scientists and science educators like Dr Raven Baxter (“the Science Maven”) and Dr Dianna Cowern (“Physics Girl”), who put their bodies on the line, and on their large, professional, video platforms, to explain intimately and honestly exactly what was happening to them, in real time.

Rafal Swierzewski, a cancer survivor and biochemist, argues that patient experience can help direct where, in the “broad ocean of ‘unmet medical needs’”, life sciences and medical research should focus. Not just as clinical trial participants or vague advisory roles but serving on Bioethics Committees, developing Risk Management Plans, conducting safety assessments, designing trial protocols, drafting informational materials and consent forms, and co-authoring papers, with greater and more specialist involvement possible in accordance with patient skills and training. I’d like to see patients also as paid reviewers for journals, assessing both methodology and findings, and on media editorial boards, before poor headlines go viral on poor research.

My experience as a patient-researcher includes government expert panels; advisory roles on research networks; patient-led research; and hypothesis testing with individual clinicians, including my own. When I got sick, I signed up for every study, twisting my brain to exhaustion on virtual cognitive tests and filling out long symptom checklists that missed whole categories of complications. Some screened me out: not a priority population for testing early in the pandemic; negative by antibody by the time supply chain shortages allowed access to necessary chemicals and centrifuge vessels.

These days, my personal participation rubric requires either access to new diagnostic tests; formats in which I am a researcher and policy advisor first, patient second, with direct influence on research design and conclusions; or a high ratio between me and the number of clinicians I can efficiently educate. I’ve lost patience with a lot of research, which spends exorbitant money proving basic mechanistic things patients deduced years ago, or coming to flawed conclusions on prevalence or symptomatology.

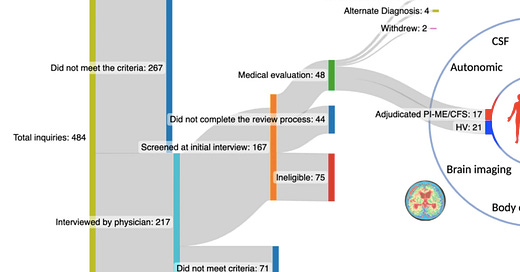

Often, it’s loomed over by a kind of diagnostic overshadowing, a misunderstanding of the correlation between mental and physical health too tightly wound into the researchers’ medical paradigm to undo. From this, we get studies like the recent National Institutes of Health study on post-infectious ME/CFS. Seven years, seventeen participants, $8 million, intentionally excluding patients with comorbidities, deconditioning, and those whose illness was too serious to participate overnight.

The study found ample biomedical evidence in the immune, nervous, cardiovascular, and metabolic systems likely to impact energy and exercise capacity: higher levels of naive B cells and lower switched-on memory cells, alterations in gene expression in the peripheral blood mononuclear cells and metabolic pathways, lower heart rate variability and increased resting heart rate, longer blood pressure recovery times, decreased blood-oxygen level dependent signalling in key brain junctions, and reduced cerebrospinal fluid catechols. But the researchers concluded that because patients showed no signs of mechanical inefficiency, muscle oxygenation, or muscle fiber composition (a finding, incidentally, that is belied by other, more rigorous, research), they were misinterpreting their own data and risk, developing lower “effort preference”, a term more typically used in depression research. “Rather than physical exhaustion or a lack of motivation,” says Brian Walitt, first author and rheumatologist, “fatigue may arise from a mismatch between what someone thinks they can achieve and what their bodies perform” and through this error cause measurable downstream effects.

“Scientists tend to favor simplicity”, says Christine Yu, sports and health journalist and author of Up to Speed: The Groundbreaking Science of Women Athletes, in an interview with Anne Helen Petersen. “Especially when the goal is to understand complex physiological, molecular, and chemical behaviour. They want to eliminate as many extraneous factors as possible to reduce the ‘noise’…. That way, when they look at the data, they can more easily pinpoint – ah, this is the thing that’s influencing the outcome or that’s making the difference…That’s why scientists recruit a standardized cohort of participants, one that’s relatively controlled and with few external variables.”

The NIH’s conclusion is so fancifully simplistic a metaphor, so out of line with patient experience, it has become a meme. Joke posts play on the history of “work-shy” or “languishing”: the racist, misogynist, ableist assumption that every body can exert the same military-grade effort to sustain the body politic, if the mind is strong enough. Patients document themselves in medical-grade dire straits with the ironic caption “effort preference”. It is a problem, methodologically, when your participants find your conclusions more funny than useful, or when only 3.5% of interested participants met your eligibility criteria in a study intended to develop a “pure” universal phenotype that ignores the illness’s inherent complexity.

Across all sectors of the workforce, but especially public-facing roles, long-term sickness has increased, and with that lost workers, efficiencies, and institutional knowledge. In Germany, absences shaved 0.8% off of growth, with a record high of illness days in 2023. In Canada, the rate of people with disabilities increased from 22% in 2017 to 27% in 2022, representing 6.2 million people total. The biggest increase was in what Statistics Canada calls “mental health disabilities” but would more accurately be described as sensory or cognitive disabilities, including seeing, learning, and memory. In the US, the Census Bureau says that more working-age Americans report serious cognitive issues (e.g., remembering, concentrating, or making decisions) than at any time since the Bureau first started surveying on it 15 years ago.

For Canadian workers who remained on the job, productivity has fallen for six consecutive quarters. Work hours, in a statistical sense, are costing people more and earning the economy less, regardless of their, or their employer’s, effort preference. When I used to manage teams of economists, I’d joke that they sometimes forgot that workers are people, and people are human, with vulnerable bodies poorly protected by economic forces. Similarly, medical researchers often forget that patients are people, with whole lives outside of and prior to their illness, pertinent expertise beyond it, and the ability to interpret their own individual and collective data. “Our bodies don’t live in a vacuum as bones, muscles, ligaments, etc,” says Yu.

Monsters are named for reputation, for where they were last seen, where they least resemble the human, their most menacing or vulnerable parts, that which gives would-be hunters clout or sets their price on open market. Lovers are named for their softest form: a diminutive containment, a metonymy of fondness, the least letters to spell affection. Illnesses are named eponymously for their inventor (e.g. Addison disease), or their mechanism of action (cancer, from the Greek for crab, and its pincer-like projections across the body, or any inflammatory -itis). More rarely, they are named for a famous patient (Lou Gehrig’s disease). In 1975, a Canadian National Institutes of Health conference on the naming of diseases concluded the possessive should not be used for physician-named diseases, “since the author neither had nor owned the disorder” but the apostrophe in Gehrig’s still stands.

Patients named Long COVID to underline its viral origin story, ongoing nature, and indefinable end-point, to own the narrative of the disorder disordering our lives. We got sick, and no one could tell us if or when we would get better, or worse. Four years later, the best analysis I’ve seen still comes from patients tracking and narrating their own health trajectory, comparing notes and surveying each other; the best larger-scale research that which picks up on a kernel of insight from pooled patient experience or from patient-researchers themselves.

There is supposedly a central hospital records system in my province that tells the linear story of my illness: how I reported it to my medical team and what they observed, the medical interventions we trialed and their outcomes. I wouldn’t know, because as a patient I only have access to individual hospital portals, with varying levels of detail. Some have test results, others only allow access through a third party provider; some are only a one-way secure messaging platform. My full patient records are only accessible by access to information request, for a fee. But I know that that narrative is deeply flawed, because I was in no state to comprehend and communicate my experience, my medical team was not asking the right questions, and the entire enterprise is heading into Year 5 of pandemic staffing shortages and both systemic and individual strain. I’ve been on the receiving end of more disastrous errors in documentation – the wrong test or dosage ordered, lost referrals gumming up phone lines that are no longer staffed – since the pandemic started, than in a lifetime of medical appointments.

My personal records are painstaking and incomplete, a mixed method mess of stolen doctors’ notes, test results, at-home biometric and symptom tracking, screenshots of discussions from patient forums, diagrams and data visualization, hypotheses and self-experiments. And this project, itself an exercise in narration as journey mapping and systems analysis. I believe so deeply that patient narrative is a better research method than most surveys or medical record studies because the data gaps and biases in the latter are rarely visible to non-patients. And one could get an entire PhD in discourse analysis, qualitative research methods, and oral history just from managing and interpreting your own flawed patient file and data. Lucia Lorenzi writes, about tracing the dropped balls in her own files: “always do your own follow up, never assume faxes get read, never assume anything, be your own scribe, do it all yourself, nobody is looking out for you but you.”

We desperately need new and different kinds of research for Long COVID, in both method and metaphor. Christine Yu, in the same interview, says “I used to skip over the methodology section to get to the good stuff – the results and discussion. As a non-scientist, I didn’t necessarily care about how the study was set up because I wasn’t going to replicate it. I generally assumed the researchers knew what they were doing and were using up-to-date and rigorous protocols. But methodology matters. It creates the universe in which we understand research findings, whether or not they are applicable and relevant and for what population of people.”

One of the problems of (studying, living with, treating) this kind of illness is that it is, at heart, an unbalancing of necessary stabilizing processes in the body: the sympathetic and parasympathetic nervous systems, nutrients and minerals, hormones and enzymes, receptors and channels, bodily processes that are supposed to be a responsive pendulum or appropriately circadian. Left unchecked, or faced with a poorly chosen intervention, they can spiral into the unspeakably worse. But most medical interventions are tested on the otherwise healthy, the straightforwardly sick, so as to better pinpoint effect, avoiding convolutions like pregnancy or comorbidities.

Yu says, “It wasn’t until 1993 that the National Institutes of Health (NIH) Revitalization Act mandated the inclusion of women and minorities in NIH-funded clinical research. It’s only been since 2016 that scientists have been required to account for sex as a biological variable in preclinical research and human studies. What that means is that the funding has historically flowed more easily to studies investigating male cells, animals, and humans.”

Today, mining patient discussions yields far more accurate data on illness-specific side effects, dosage, and the risks and benefits of polypharmacy than anything companies are obliged to report from testing. An 8-patient Yale study on guanfacine and NAC becomes thousands of patients comparing notes online, adjusting the protocol, and making educated guesses as to the impact of their unique pathophysiology on experiment outcomes. Most of what clinicians are currently advised to recommend to their patients is off-label usage, meaning a use the country’s oversight body has not approved, with no rigorous, relevant testing data that meets the threshold of the FDA or Health Canada.

Complex illnesses demand complex methodology and complex solutions, a higher effort preference on the part of funded researchers, and meticulous self-reflection on their own (and their field’s) biases. A problem solved by, or at least with, the people who know it best.
